Explanatory reasoning

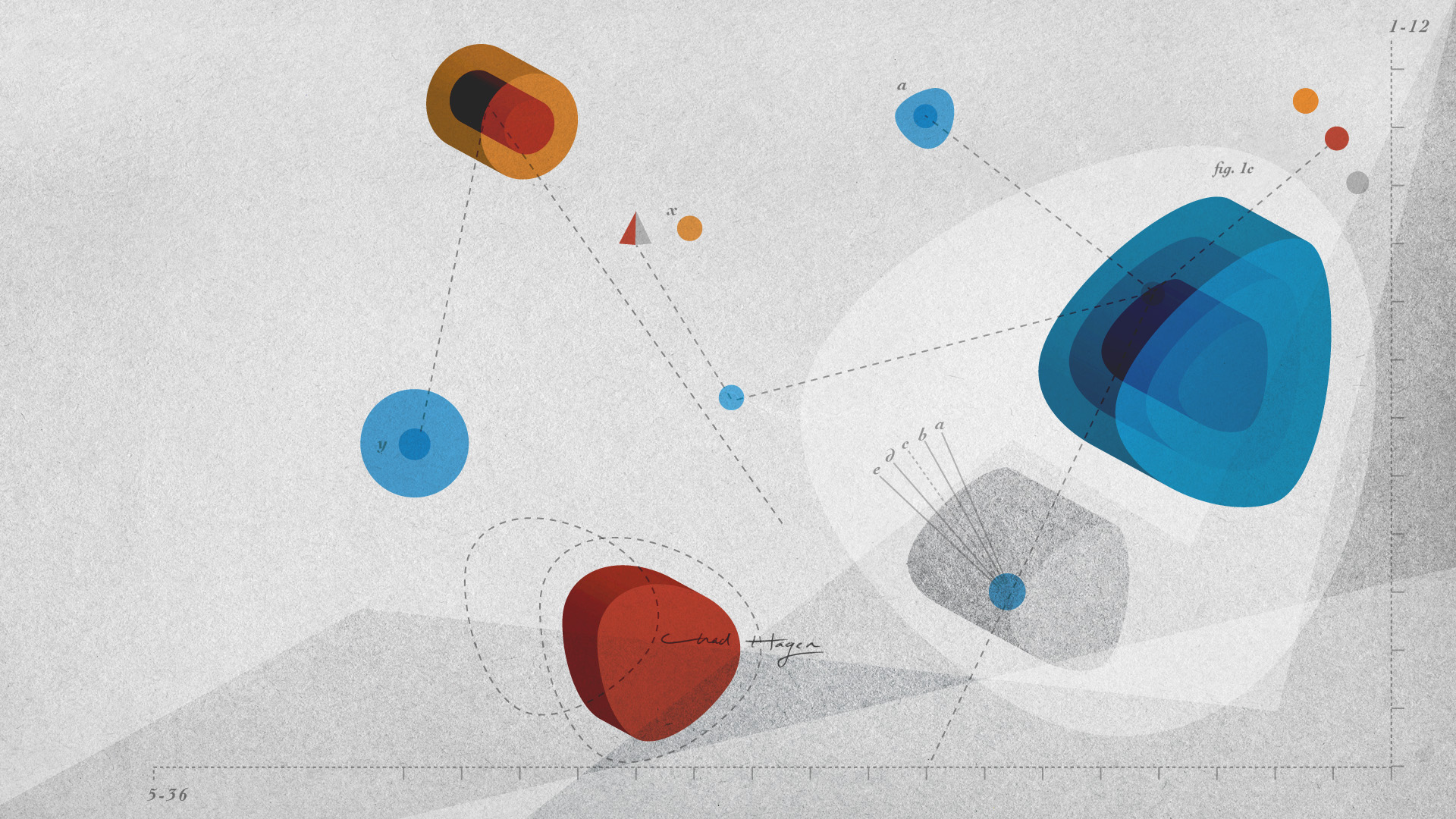

When reasoners realize that the information they have is incomplete, incoherent, or inconsistent, they will try to construct an explanatory mental model. A plausible explanation is likely to imply changes to beliefs. Those changes may be minimal or they may be drastic — either way, the explanation forces individuals to consider novel entities, properties, or relations, which in turn can be used to make predictions about the world.

A series of studies by Johnson-Laird, Girotto, Legrenzi, and Khemlani shows that reasoners spontaneously generate explanations when they detect inconsistencies, and that they use those explanations in systematic ways: the explanations help them refute and weaken general claims. The results contravene the notion of “minimalism” first proposed by William James, who argued that when people detect an inconsistency, they change their beliefs in the most minimal way possible. The studies show instead that people are willing to make broad changes to their beliefs. The changes can be so broad, in fact, that an available explanation makes it less likely that reasoners will judge inconsistent statements as being inconsistent.

The studies reveal both the usefulness and the danger of explanatory reasoning. Humans have a natural tendency to reason from inconsistency to consistency, and to rely on a procedure (called “abduction”) that generates explanations from their knowledge of causal relations. As a consequence, they overlook the inconsistency. In some situations, this behavior is practical, because it enables people to make sensible interpretations of generalizations and to understand discourse. In other situations, however, it may lead to striking lapses in reasoning. When a plausible explanation is available, regardless of whether it is true, reasoners may overlook inconsistencies and evaluate the conflicting statements in accordance with the explanation. In sum, to explain an inconsistency is often to explain it away.

Collaborators

Vittorio Girotto, Phil Johnson-Laird, Paolo Legrenzi, Sangeet Khemlani, Joanna Korman

Representative papers

- Johnson-Laird, P. N. (1983). Mental models: Towards a cognitive science of language, inference, and consciousness. Cambridge, MA: Harvard University Press.

- Johnson-Laird, P. N. (2006). How we reason. Oxford: Oxford University Press.

- Johnson-Laird, P. N., Girotto, V., & Legrenzi, P. (2004). Reasoning from inconsistency to consistency. Psychological Review, 111, 640-661.

- Khemlani, S., & Johnson-Laird, P. N. (2013). Cognitive changes from explanations. Journal of Cognitive Psychology, 25, 139-146.

- Khemlani, S., & Johnson-Laird, P. N. (2012). Hidden conflicts: Explanations make inconsistencies harder to detect. Acta Psychologica, 139, 486-491.

- Khemlani, S., & Johnson-Laird, P. N. (2011). The need to explain. Quarterly Journal of Experimental Psychology, 64, 2276-2288.

- Korman, J., & Khemlani, S. (2020). Explanatory completeness. Acta Psychologica, 209, 103139.